Portal:Computer programming

| This portal or section is in a state of significant expansion or restructuring. You are welcome to assist in its construction by editing it as well. If this portal has not been edited in several days, please remove this template. If you are the editor who added this template and you are actively editing, please be sure to replace this template with {{in use}} during the active editing session. Click on the link for template parameters to use.

This page was last edited by Northamerica1000 (talk | contribs) 3 years ago. (Update timer) |

Portal maintenance status: (September 2019)

|

The Computer Programming Portal

Computer programming or coding is the composition of sequences of instructions, called programs, that computers can follow to perform tasks. It involves designing and implementing algorithms, step-by-step specifications of procedures, by writing code in one or more programming languages. Programmers typically use high-level programming languages that are more easily intelligible to humans than machine code, which is directly executed by the central processing unit. Proficient programming usually requires expertise in several different subjects, including knowledge of the application ___domain, details of programming languages and generic code libraries, specialized algorithms, and formal logic.

Auxiliary tasks accompanying and related to programming include analyzing requirements, testing, debugging (investigating and fixing problems), implementation of build systems, and management of derived artifacts, such as programs' machine code. While these are sometimes considered programming, often the term software development is used for this larger overall process – with the terms programming, implementation, and coding reserved for the writing and editing of code per se. Sometimes software development is known as software engineering, especially when it employs formal methods or follows an engineering design process. (Full article...)

Selected articles - load new batch

-

Image 1Artificial intelligence (AI) is the capability of computational systems to perform tasks typically associated with human intelligence, such as learning, reasoning, problem-solving, perception, and decision-making. It is a field of research in computer science that develops and studies methods and software that enable machines to perceive their environment and use learning and intelligence to take actions that maximize their chances of achieving defined goals.

High-profile applications of AI include advanced web search engines (e.g., Google Search); recommendation systems (used by YouTube, Amazon, and Netflix); virtual assistants (e.g., Google Assistant, Siri, and Alexa); autonomous vehicles (e.g., Waymo); generative and creative tools (e.g., language models and AI art); and superhuman play and analysis in strategy games (e.g., chess and Go). However, many AI applications are not perceived as AI: "A lot of cutting edge AI has filtered into general applications, often without being called AI because once something becomes useful enough and common enough it's not labeled AI anymore."

Various subfields of AI research are centered around particular goals and the use of particular tools. The traditional goals of AI research include learning, reasoning, knowledge representation, planning, natural language processing, perception, and support for robotics. To reach these goals, AI researchers have adapted and integrated a wide range of techniques, including search and mathematical optimization, formal logic, artificial neural networks, and methods based on statistics, operations research, and economics. AI also draws upon psychology, linguistics, philosophy, neuroscience, and other fields. Some companies, such as OpenAI, Google DeepMind and Meta, aim to create artificial general intelligence (AGI)—AI that can complete virtually any cognitive task at least as well as a human. (Full article...) -

Image 2

Scala (/ˈskɑːlɑː/ SKAH-lah) is a strongly statically typed high-level general-purpose programming language that supports both object-oriented programming and functional programming. Designed to be concise, many of Scala's design decisions are intended to address criticisms of Java.

Scala source code can be compiled to Java bytecode and run on a Java virtual machine (JVM). Scala can also be transpiled to JavaScript to run in a browser, or compiled directly to a native executable. When running on the JVM, Scala provides language interoperability with Java so that libraries written in either language may be referenced directly in Scala or Java code. Like Java, Scala is object-oriented, and uses a syntax termed curly-brace which is similar to the language C. Since Scala 3, there is also an option to use the off-side rule (indenting) to structure blocks, and its use is advised. Martin Odersky has said that this turned out to be the most productive change introduced in Scala 3.

Unlike Java, Scala has many features of functional programming languages (like Scheme, Standard ML, and Haskell), including currying, immutability, lazy evaluation, and pattern matching. It also has an advanced type system supporting algebraic data types, covariance and contravariance, higher-order types (but not higher-rank types), anonymous types, operator overloading, optional parameters, named parameters, raw strings, and an experimental exception-only version of algebraic effects that can be seen as a more powerful version of Java's checked exceptions. (Full article...) -

Image 3

Yoshua Bengio OC OQ FRS FRSC (born March 5, 1964) is a Canadian computer scientist, and a pioneer of artificial neural networks and deep learning. He is a professor at the Université de Montréal and scientific director of the AI institute MILA.

Bengio received the 2018 ACM A.M. Turing Award, often referred to as the "Nobel Prize of Computing", together with Geoffrey Hinton and Yann LeCun, for their foundational work on deep learning. Bengio, Hinton, and LeCun are sometimes referred to as the "Godfathers of AI". Bengio is the most-cited computer scientist globally (by both total citations and by h-index), and the most-cited living scientist across all fields (by total citations). In 2024, TIME Magazine included Bengio in its yearly list of the world's 100 most influential people. (Full article...) -

Image 4

A 12-row/80-column IBM punched card from the mid-twentieth century

A punched card (also known as a punch card or Hollerith card) is a stiff paper-based medium used to store digital information through the presence or absence of holes in predefined positions. Developed from earlier uses in textile looms such as the Jacquard loom (1800s), the punched card was first widely implemented in data processing by Herman Hollerith for the 1890 United States Census. His innovations led to the formation of companies that eventually became IBM.

Punched cards became essential to business, scientific, and governmental data processing during the 20th century, especially in unit record machines and early digital computers. The most well-known format was the IBM 80-column card introduced in 1928, which became an industry standard. Cards were used for data input, storage, and software programming. Though rendered obsolete by magnetic media and terminals by the 1980s, punched cards influenced lasting conventions such as the 80-character line length in computing, and as of 2012, were still used in some voting machines to record votes. Today, they are remembered as icons of early automation and computing history. Their legacy persists in modern computing, notably influencing the 80-character line standard in command-line interfaces and programming environments. (Full article...) -

Image 5

Eiffel is an object-oriented programming language designed by Bertrand Meyer (an object-orientation proponent and author of Object-Oriented Software Construction) and Eiffel Software. Meyer conceived the language in 1985 with the goal of increasing the reliability of commercial software development. The first version was released in 1986. In 2005, the International Organization for Standardization (ISO) released a technical standard for Eiffel.

The design of the language is closely connected with the Eiffel programming method. Both are based on a set of principles, including design by contract, command–query separation, the uniform-access principle, the single-choice principle, the open–closed principle, and option–operand separation.

Many concepts initially introduced by Eiffel were later added into Java, C#, and other languages. New language design ideas, particularly through the Ecma/ISO standardization process, continue to be incorporated into the Eiffel language. (Full article...) -

Image 6

tcsh and sh shell windows on a Mac OS X Leopard desktop

A Unix shell is a shell that provides a command-line user interface for a Unix-like operating system. A Unix shell provides a command language that can be used either interactively or for writing a shell script. A user typically interacts with a Unix shell via a terminal emulator; however, direct access via serial hardware connections or Secure Shell are common for server systems. Although use of a Unix shell is popular with some users, others prefer to use a windowing system such as desktop Linux distribution or macOS instead of a command-line interface.

A user may have access to multiple Unix shells with one configured to run by default when the user logs in interactively. The default selection is typically stored in a user's profile; for example, in the local passwd file or in a distributed configuration system such as NIS or LDAP. A user may use other shells nested inside the default shell.

A Unix shell may provide many features including: variable definition and substitution, command substitution, filename wildcarding, stream piping, control flow structures (condition-testing and iteration), working directory context, and here document. (Full article...) -

Image 7

Perl is a high-level, general-purpose, interpreted, dynamic programming language. Though Perl is not officially an acronym, there are various backronyms in use, including "Practical Extraction and Reporting Language".

Perl was developed by Larry Wall in 1987 as a general-purpose Unix scripting language to make report processing easier. Since then, it has undergone many changes and revisions. Perl originally was not capitalized and the name was changed to being capitalized by the time Perl 4 was released. The latest release is Perl 5, first released in 1994. From 2000 to October 2019 a sixth version of Perl was in development; the sixth version's name was changed to Raku. Both languages continue to be developed independently by different development teams which liberally borrow ideas from each other.

Perl borrows features from other programming languages including C, sh, AWK, and sed. It provides text processing facilities without the arbitrary data-length limits of many contemporary Unix command line tools. Perl is a highly expressive programming language: source code for a given algorithm can be short and highly compressible. (Full article...) -

Image 8COBOL (/ˈkoʊbɒl, -bɔːl/; an acronym for "common business-oriented language") is a compiled English-like computer programming language designed for business use. It is an imperative, procedural, and, since 2002, object-oriented language. COBOL is primarily used in business, finance, and administrative systems for companies and governments. COBOL is still widely used in applications deployed on mainframe computers, such as large-scale batch and transaction processing jobs. Many large financial institutions were developing new systems in the language as late as 2006, but most programming in COBOL today is purely to maintain existing applications. Programs are being moved to new platforms, rewritten in modern languages, or replaced with other software.

COBOL was designed in 1959 by CODASYL and was partly based on the programming language FLOW-MATIC, designed by Grace Hopper. It was created as part of a U.S. Department of Defense effort to create a portable programming language for data processing. It was originally seen as a stopgap, but the Defense Department promptly pressured computer manufacturers to provide it, resulting in its widespread adoption. It was standardized in 1968 and has been revised five times. Expansions include support for structured and object-oriented programming. The current standard is ISO/IEC 1989:2023.

COBOL statements have prose syntax such asMOVE x TO y, which was designed to be self-documenting and highly readable. However, it is verbose and uses over 300 reserved words compared to the succinct and mathematically inspired syntax of other languages. (Full article...) -

Image 9

A Blender screenshot displaying Suzanne, a 3D test model

Computer graphics deals with generating images and art with the aid of computers. Computer graphics is a core technology in digital photography, film, video games, digital art, cell phone and computer displays, and many specialized applications. A great deal of specialized hardware and software has been developed, with the displays of most devices being driven by computer graphics hardware. It is a vast and recently developed area of computer science. The phrase was coined in 1960 by computer graphics researchers Verne Hudson and William Fetter of Boeing. It is often abbreviated as CG, or typically in the context of film as computer generated imagery (CGI). The non-artistic aspects of computer graphics are the subject of computer science research.

Some topics in computer graphics include user interface design, sprite graphics, raster graphics, rendering, ray tracing, geometry processing, computer animation, vector graphics, 3D modeling, shaders, GPU design, implicit surfaces, visualization, scientific computing, image processing, computational photography, scientific visualization, computational geometry and computer vision, among others. The overall methodology depends heavily on the underlying sciences of geometry, optics, physics, and perception.

Computer graphics is responsible for displaying art and image data effectively and meaningfully to the consumer. It is also used for processing image data received from the physical world, such as photo and video content. Computer graphics development has had a significant impact on many types of media and has revolutionized animation, movies, advertising, and video games in general. (Full article...) -

Image 10Ronald Paul "Ron" Fedkiw (born February 27, 1968) is a full professor in the Stanford University department of computer science and a leading researcher in the field of computer graphics, focusing on topics relating to physically based simulation of natural phenomena and machine learning. His techniques have been employed in many motion pictures. He has earned recognition at the 80th Academy Awards and the 87th Academy Awards as well as from the National Academy of Sciences.

His first Academy Award was awarded for developing techniques that enabled many technically sophisticated adaptations including the visual effects in 21st century movies in the Star Wars, Harry Potter, Terminator, and Pirates of the Caribbean franchises. Fedkiw has designed a platform that has been used to create many of the movie world's most advanced special effects since it was first used on the T-X character in Terminator 3: Rise of the Machines. His second Academy Award was awarded for computer graphics techniques for special effects for large scale destruction. Although he has won an Oscar for his work, he does not design the visual effects that use his technique. Instead, he has developed a system that other award-winning technicians and engineers have used to create visual effects for some of the world's most expensive and highest-grossing movies. (Full article...) -

Image 11In C++ computer programming, allocators are a component of the C++ Standard Library. The standard library provides several data structures, such as list and set, commonly referred to as containers. A common trait among these containers is their ability to change size during the execution of the program. To achieve this, some form of dynamic memory allocation is usually required. Allocators handle all the requests for allocation and deallocation of memory for a given container. The C++ Standard Library provides general-purpose allocators that are used by default; however, custom allocators may also be supplied by the programmer.

Allocators were invented by Alexander Stepanov as part of the Standard Template Library (STL). They were originally intended as a means to make the library more flexible and independent of the underlying memory model, allowing programmers to utilize custom pointer and reference types with the library. However, in the process of adopting STL into the C++ standard, the C++ standardization committee realized that a complete abstraction of the memory model would incur unacceptable performance penalties. To remedy this, the requirements of allocators were made more restrictive. As a result, the level of customization provided by allocators is more limited than was originally envisioned by Stepanov.

Nevertheless, there are many scenarios where customized allocators are desirable. Some of the most common reasons for writing custom allocators include improving performance of allocations by using memory pools, and encapsulating access to different types of memory, like shared memory or garbage-collected memory. In particular, programs with many frequent allocations of small amounts of memory may benefit greatly from specialized allocators, both in terms of running time and memory footprint. (Full article...) -

Image 12

Python is a high-level, general-purpose programming language. Its design philosophy emphasizes code readability with the use of significant indentation.

Python is dynamically type-checked and garbage-collected. It supports multiple programming paradigms, including structured (particularly procedural), object-oriented and functional programming.

Guido van Rossum began working on Python in the late 1980s as a successor to the ABC programming language. Python 3.0, released in 2008, was a major revision not completely backward-compatible with earlier versions. Recent versions, such as Python 3.12, have added capabilites and keywords for typing (and more; e.g. increasing speed); helping with (optional) static typing. Currently only versions in the 3.x series are supported. (Full article...) -

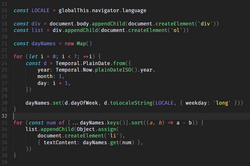

Image 13

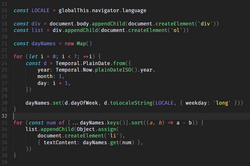

Source code for a computer program written in the JavaScript language. It demonstrates the appendChild method. The method adds a new child node to an existing parent node. It is commonly used to dynamically modify the structure of an HTML document.

A computer program is a sequence or set of instructions in a programming language for a computer to execute. It is one component of software, which also includes documentation and other intangible components.

A computer program in its human-readable form is called source code. Source code needs another computer program to execute because computers can only execute their native machine instructions. Therefore, source code may be translated to machine instructions using a compiler written for the language. (Assembly language programs are translated using an assembler.) The resulting file is called an executable. Alternatively, source code may execute within an interpreter written for the language.

If the executable is requested for execution, then the operating system loads it into memory and starts a process. The central processing unit will soon switch to this process so it can fetch, decode, and then execute each machine instruction. (Full article...) -

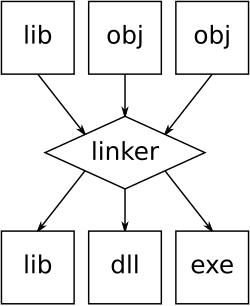

Image 14

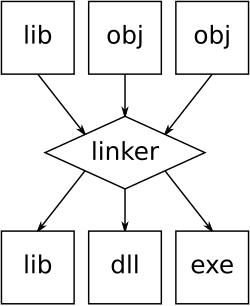

An illustration of the linking process. Object files and static libraries are assembled into a new library or executable

A linker or link editor is a computer program that combines intermediate software build files such as object and library files into a single executable file such as a program or library. A linker is often part of a toolchain that includes a compiler and/or assembler that generates intermediate files that the linker processes. The linker may be integrated with other toolchain tools such that the user does not interact with the linker directly.

A simpler version that writes its output directly to memory is called the loader, though loading is typically considered a separate process. (Full article...) -

Image 15

In computing, assembly language (alternatively assembler language or symbolic machine code), often referred to simply as assembly and commonly abbreviated as ASM or asm, is any low-level programming language with a very strong correspondence between the instructions in the language and the architecture's machine code instructions. Assembly language usually has one statement per machine code instruction (1:1), but constants, comments, assembler directives, symbolic labels of, e.g., memory locations, registers, and macros are generally also supported.

The first assembly code in which a language is used to represent machine code instructions is found in Kathleen and Andrew Donald Booth's 1947 work, Coding for A.R.C.. Assembly code is converted into executable machine code by a utility program referred to as an assembler. The term "assembler" is generally attributed to Wilkes, Wheeler and Gill in their 1951 book The Preparation of Programs for an Electronic Digital Computer, who, however, used the term to mean "a program that assembles another program consisting of several sections into a single program". The conversion process is referred to as assembly, as in assembling the source code. The computational step when an assembler is processing a program is called assembly time.

Because assembly depends on the machine code instructions, each assembly language is specific to a particular computer architecture such as x86 or ARM. (Full article...)

Selected images

-

Image 1An IBM Port-A-Punch punched card

-

Image 2Margaret Hamilton standing next to the navigation software that she and her MIT team produced for the Apollo Project.

-

Image 3Grace Hopper at the UNIVAC keyboard, c. 1960. Grace Brewster Murray: American mathematician and rear admiral in the U.S. Navy who was a pioneer in developing computer technology, helping to devise UNIVAC I. the first commercial electronic computer, and naval applications for COBOL (common-business-oriented language).

-

Image 4A head crash on a modern hard disk drive

-

Image 5Output from a (linearised) shallow water equation model of water in a bathtub. The water experiences 5 splashes which generate surface gravity waves that propagate away from the splash locations and reflect off of the bathtub walls.

-

Image 8Deep Blue was a chess-playing expert system run on a unique purpose-built IBM supercomputer. It was the first computer to win a game, and the first to win a match, against a reigning world champion under regular time controls. Photo taken at the Computer History Museum.

-

Image 9Stephen Wolfram is a British-American computer scientist, physicist, and businessman. He is known for his work in computer science, mathematics, and in theoretical physics.

-

Image 10Ada Lovelace was an English mathematician and writer, chiefly known for her work on Charles Babbage's proposed mechanical general-purpose computer, the Analytical Engine. She was the first to recognize that the machine had applications beyond pure calculation, and to have published the first algorithm intended to be carried out by such a machine. As a result, she is often regarded as the first computer programmer.

-

Image 11GNOME Shell, GNOME Clocks, Evince, gThumb and GNOME Files at version 3.30, in a dark theme

-

Image 13Partial view of the Mandelbrot set. Step 1 of a zoom sequence: Gap between the "head" and the "body" also called the "seahorse valley".

Did you know? - load more entries

- ... that the Gale–Shapley algorithm was used to assign medical students to residencies long before its publication by Gale and Shapley?

- ... that a "hacker" with blog posts written by ChatGPT was at the center of an online scavenger hunt promoting Avenged Sevenfold's album Life Is but a Dream...?

- ... that according to the Open Syllabus Project, Diana Hacker is the second most-read female author on college campuses after Kate L. Turabian?

- ... that NATO was once targeted by a group of "gay furry hackers"?

- ... that a pink skin for Mercy in the video game Overwatch helped raise more than $12 million for breast cancer research?

- ... that both Thackeray and Longfellow bought paintings by Fanny Steers?

Subcategories

WikiProjects

- There are many users interested in computer programming, join them.

Computer programming news

No recent news

Topics

Related portals

Associated Wikimedia

The following Wikimedia Foundation sister projects provide more on this subject:

-

Commons

Free media repository -

Wikibooks

Free textbooks and manuals -

Wikidata

Free knowledge base -

Wikinews

Free-content news -

Wikiquote

Collection of quotations -

Wikisource

Free-content library -

Wikiversity

Free learning tools -

Wiktionary

Dictionary and thesaurus