Portal:Computer programming

→

Portal maintenance status: (September 2019)

|

The Computer Programming Portal

Computer programming or coding is the composition of sequences of instructions, called programs, that computers can follow to perform tasks. It involves designing and implementing algorithms, step-by-step specifications of procedures, by writing code in one or more programming languages. Programmers typically use high-level programming languages that are more easily intelligible to humans than machine code, which is directly executed by the central processing unit. Proficient programming usually requires expertise in several different subjects, including knowledge of the application ___domain, details of programming languages and generic code libraries, specialized algorithms, and formal logic.

Auxiliary tasks accompanying and related to programming include analyzing requirements, testing, debugging (investigating and fixing problems), implementation of build systems, and management of derived artifacts, such as programs' machine code. While these are sometimes considered programming, often the term software development is used for this larger overall process – with the terms programming, implementation, and coding reserved for the writing and editing of code per se. Sometimes software development is known as software engineering, especially when it employs formal methods or follows an engineering design process. (Full article...)

Selected articles - load new batch

-

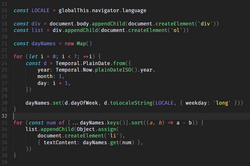

Image 1

C# (/ˌsiː ˈʃɑːrp/ see SHARP) is a general-purpose high-level programming language supporting multiple paradigms. C# encompasses static typing, strong typing, lexically scoped, imperative, declarative, functional, generic, object-oriented (class-based), and component-oriented programming disciplines.

The principal inventors of the C# programming language were Anders Hejlsberg, Scott Wiltamuth, and Peter Golde from Microsoft. It was first widely distributed in July 2000 and was later approved as an international standard by Ecma (ECMA-334) in 2002 and ISO/IEC (ISO/IEC 23270 and 20619) in 2003. Microsoft introduced C# along with .NET Framework and Microsoft Visual Studio, both of which are technically speaking, closed-source. At the time, Microsoft had no open-source products. Four years later, in 2004, a free and open-source project called Microsoft Mono began, providing a cross-platform compiler and runtime environment for the C# programming language. A decade later, Microsoft released Visual Studio Code (code editor), Roslyn (compiler), and the unified .NET platform (software framework), all of which support C# and are free, open-source, and cross-platform. Mono also joined Microsoft but was not merged into .NET.

As of January 2025,[update] the most recent stable version of the language is C# 13.0, which was released in 2024 in .NET 9.0 (Full article...) -

Image 2

Perl is a high-level, general-purpose, interpreted, dynamic programming language. Though Perl is not officially an acronym, there are various backronyms in use, including "Practical Extraction and Reporting Language".

Perl was developed by Larry Wall in 1987 as a general-purpose Unix scripting language to make report processing easier. Since then, it has undergone many changes and revisions. Perl originally was not capitalized and the name was changed to being capitalized by the time Perl 4 was released. The latest release is Perl 5, first released in 1994. From 2000 to October 2019 a sixth version of Perl was in development; the sixth version's name was changed to Raku. Both languages continue to be developed independently by different development teams which liberally borrow ideas from each other.

Perl borrows features from other programming languages including C, sh, AWK, and sed. It provides text processing facilities without the arbitrary data-length limits of many contemporary Unix command line tools. Perl is a highly expressive programming language: source code for a given algorithm can be short and highly compressible. (Full article...) -

Image 3

A 12-row/80-column IBM punched card from the mid-twentieth century

A punched card (also known as a punch card or Hollerith card) is a stiff paper-based medium used to store digital information through the presence or absence of holes in predefined positions. Developed from earlier uses in textile looms such as the Jacquard loom (1800s), the punched card was first widely implemented in data processing by Herman Hollerith for the 1890 United States Census. His innovations led to the formation of companies that eventually became IBM.

Punched cards became essential to business, scientific, and governmental data processing during the 20th century, especially in unit record machines and early digital computers. The most well-known format was the IBM 80-column card introduced in 1928, which became an industry standard. Cards were used for data input, storage, and software programming. Though rendered obsolete by magnetic media and terminals by the 1980s, punched cards influenced lasting conventions such as the 80-character line length in computing, and as of 2012, were still used in some voting machines to record votes. Today, they are remembered as icons of early automation and computing history. Their legacy persists in modern computing, notably influencing the 80-character line standard in command-line interfaces and programming environments. (Full article...) -

Image 4In computer systems a loader is the part of an operating system that is responsible for loading programs and libraries. It is one of the essential stages in the process of starting a program, as it places programs into memory and prepares them for execution. Loading a program involves either memory-mapping or copying the contents of the executable file containing the program instructions into memory, and then carrying out other required preparatory tasks to prepare the executable for running. Once loading is complete, the operating system starts the program by passing control to the loaded program code.

All operating systems that support program loading have loaders, apart from highly specialized computer systems that only have a fixed set of specialized programs. Embedded systems typically do not have loaders, and instead, the code executes directly from ROM or similar. In order to load the operating system itself, as part of booting, a specialized boot loader is used. In many operating systems, the loader resides permanently in memory, though some operating systems that support virtual memory may allow the loader to be located in a region of memory that is pageable.

In the case of operating systems that support virtual memory, the loader may not actually copy the contents of executable files into memory, but rather may simply declare to the virtual memory subsystem that there is a mapping between a region of memory allocated to contain the running program's code and the contents of the associated executable file. (See memory-mapped file.) The virtual memory subsystem is then made aware that pages with that region of memory need to be filled on demand if and when program execution actually hits those areas of unfilled memory. This may mean parts of a program's code are not actually copied into memory until they are actually used, and unused code may never be loaded into memory at all. (Full article...) -

Image 5Prolog is a logic programming language that has its origins in artificial intelligence, automated theorem proving, and computational linguistics.

Prolog has its roots in first-order logic, a formal logic. Unlike many other programming languages, Prolog is intended primarily as a declarative programming language: the program is a set of facts and rules, which define relations. A computation is initiated by running a query over the program.

Prolog was one of the first logic programming languages and remains the most popular such language today, with several free and commercial implementations available. The language has been used for theorem proving, expert systems, term rewriting, type systems, and automated planning, as well as its original intended field of use, natural language processing. (Full article...) -

Image 6

Logo since August 17, 2012

Microsoft is a multinational computer technology corporation. Microsoft was founded on April 4, 1975, by Bill Gates and Paul Allen in Albuquerque, New Mexico. Its current best-selling products are the Microsoft Windows operating system; Microsoft Office, a suite of productivity software; Xbox, a line of entertainment of games, music, and video; Bing, a line of search engines; and Microsoft Azure, a cloud services platform.

In 1980, Microsoft formed a partnership with IBM to bundle Microsoft's operating system with IBM computers; with that deal, IBM paid Microsoft a royalty for every sale. In 1985, IBM requested Microsoft to develop a new operating system for their computers called OS/2. Microsoft produced that operating system, but also continued to sell their own alternative, which proved to be in direct competition with OS/2. Microsoft Windows eventually overshadowed OS/2 in terms of sales. When Microsoft launched several versions of Microsoft Windows in the 1990s, they had captured over 90% market share of the world's personal computers.

As of June 30, 2015, Microsoft has a global annual revenue of US$86.83 billion (~$109 billion in 2023) and 128,076 employees worldwide. It develops, manufactures, licenses, and supports a wide range of software products for computing devices. (Full article...) -

Image 7

A Blender screenshot displaying Suzanne, a 3D test model

Computer graphics deals with generating images and art with the aid of computers. Computer graphics is a core technology in digital photography, film, video games, digital art, cell phone and computer displays, and many specialized applications. A great deal of specialized hardware and software has been developed, with the displays of most devices being driven by computer graphics hardware. It is a vast and recently developed area of computer science. The phrase was coined in 1960 by computer graphics researchers Verne Hudson and William Fetter of Boeing. It is often abbreviated as CG, or typically in the context of film as computer generated imagery (CGI). The non-artistic aspects of computer graphics are the subject of computer science research.

Some topics in computer graphics include user interface design, sprite graphics, raster graphics, rendering, ray tracing, geometry processing, computer animation, vector graphics, 3D modeling, shaders, GPU design, implicit surfaces, visualization, scientific computing, image processing, computational photography, scientific visualization, computational geometry and computer vision, among others. The overall methodology depends heavily on the underlying sciences of geometry, optics, physics, and perception.

Computer graphics is responsible for displaying art and image data effectively and meaningfully to the consumer. It is also used for processing image data received from the physical world, such as photo and video content. Computer graphics development has had a significant impact on many types of media and has revolutionized animation, movies, advertising, and video games in general. (Full article...) -

Image 8

MATLAB (Matrix Laboratory) is a proprietary multi-paradigm programming language and numeric computing environment developed by MathWorks. MATLAB allows matrix manipulations, plotting of functions and data, implementation of algorithms, creation of user interfaces, and interfacing with programs written in other languages.

Although MATLAB is intended primarily for numeric computing, an optional toolbox uses the MuPAD symbolic engine allowing access to symbolic computing abilities. An additional package, Simulink, adds graphical multi-___domain simulation and model-based design for dynamic and embedded systems.

As of 2020[update], MATLAB has more than four million users worldwide. They come from various backgrounds of engineering, science, and economics. As of 2017[update], more than 5000 global colleges and universities use MATLAB to support instruction and research. (Full article...) -

Image 9

In computing, assembly language (alternatively assembler language or symbolic machine code), often referred to simply as assembly and commonly abbreviated as ASM or asm, is any low-level programming language with a very strong correspondence between the instructions in the language and the architecture's machine code instructions. Assembly language usually has one statement per machine code instruction (1:1), but constants, comments, assembler directives, symbolic labels of, e.g., memory locations, registers, and macros are generally also supported.

The first assembly code in which a language is used to represent machine code instructions is found in Kathleen and Andrew Donald Booth's 1947 work, Coding for A.R.C.. Assembly code is converted into executable machine code by a utility program referred to as an assembler. The term "assembler" is generally attributed to Wilkes, Wheeler and Gill in their 1951 book The Preparation of Programs for an Electronic Digital Computer, who, however, used the term to mean "a program that assembles another program consisting of several sections into a single program". The conversion process is referred to as assembly, as in assembling the source code. The computational step when an assembler is processing a program is called assembly time.

Because assembly depends on the machine code instructions, each assembly language is specific to a particular computer architecture such as x86 or ARM. (Full article...) -

Image 10

Charles Babbage KH FRS (/ˈbæbɪdʒ/; 26 December 1791 – 18 October 1871) was an English polymath. A mathematician, philosopher, inventor and mechanical engineer, Babbage originated the concept of a digital programmable computer.

Babbage is considered by some to merit the title of "father of the computer". He is credited with inventing the first mechanical computer, the difference engine, that eventually led to more complex electronic designs, though all the essential ideas of modern computers are to be found in his analytical engine, programmed using a principle openly borrowed from the Jacquard loom. As part of his computer work, he also designed the first computer printers. He had a broad range of interests in addition to his work on computers, covered in his 1832 book Economy of Manufactures and Machinery. He was an important figure in the social scene in London, and is credited with importing the "scientific soirée" from France with his well-attended Saturday evening soirées. His varied work in other fields has led him to be described as "pre-eminent" among the many polymaths of his century.

Babbage, who died before the complete successful engineering of many of his designs, including his Difference Engine and Analytical Engine, remained a prominent figure in the ideating of computing. Parts of his incomplete mechanisms are on display in the Science Museum in London. In 1991, a functioning difference engine was constructed from the original plans. Built to tolerances achievable in the 19th century, the success of the finished engine indicated that Babbage's machine would have worked. (Full article...) -

Image 11

R is a programming language for statistical computing and data visualization. It has been widely adopted in the fields of data mining, bioinformatics, data analysis, and data science.

The core R language is extended by a large number of software packages, which contain reusable code, documentation, and sample data. Some of the most popular R packages are in the tidyverse collection, which enhances functionality for visualizing, transforming, and modelling data, as well as improves the ease of programming (according to the authors and users).

R is free and open-source software distributed under the GNU General Public License. The language is implemented primarily in C, Fortran, and R itself. Precompiled executables are available for the major operating systems (including Linux, MacOS, and Microsoft Windows). (Full article...) -

Image 12

Julia is a dynamic general-purpose programming language. As a high-level language, distinctive aspects of Julia's design include a type system with parametric polymorphism, the use of multiple dispatch as a core programming paradigm, just-in-time (JIT) compilation and a parallel garbage collection implementation. Notably Julia does not support classes with encapsulated methods but instead relies on the types of all of a function's arguments to determine which method will be called.

By default, Julia is run similarly to scripting languages, using its runtime, and allows for interactions, but Julia programs/source code can also optionally be sent to users in one ready-to-install/run file, which can be made quickly, not needing anything preinstalled.

Julia programs can reuse libraries from other languages (or itself be reused from other); Julia has a special no-boilerplate keyword allowing calling e.g. C, Fortran or Rust libraries, and e.g. PythonCall.jl uses it indirectly for you, and Julia (libraries) can also be called from other languages, e.g. Python and R, and several Julia packages have been made easily available from those languages, in the form of Python and R libraries for corresponding Julia packages. Calling in either direction has been implemented for many languages, not just those and C++. (Full article...) -

Image 13In C++ computer programming, allocators are a component of the C++ Standard Library. The standard library provides several data structures, such as list and set, commonly referred to as containers. A common trait among these containers is their ability to change size during the execution of the program. To achieve this, some form of dynamic memory allocation is usually required. Allocators handle all the requests for allocation and deallocation of memory for a given container. The C++ Standard Library provides general-purpose allocators that are used by default; however, custom allocators may also be supplied by the programmer.

Allocators were invented by Alexander Stepanov as part of the Standard Template Library (STL). They were originally intended as a means to make the library more flexible and independent of the underlying memory model, allowing programmers to utilize custom pointer and reference types with the library. However, in the process of adopting STL into the C++ standard, the C++ standardization committee realized that a complete abstraction of the memory model would incur unacceptable performance penalties. To remedy this, the requirements of allocators were made more restrictive. As a result, the level of customization provided by allocators is more limited than was originally envisioned by Stepanov.

Nevertheless, there are many scenarios where customized allocators are desirable. Some of the most common reasons for writing custom allocators include improving performance of allocations by using memory pools, and encapsulating access to different types of memory, like shared memory or garbage-collected memory. In particular, programs with many frequent allocations of small amounts of memory may benefit greatly from specialized allocators, both in terms of running time and memory footprint. (Full article...) -

Image 14Forth is a stack-oriented programming language and interactive integrated development environment designed by Charles H. "Chuck" Moore and first used by other programmers in 1970.

Although not an acronym, the language's name in its early years was often spelled in all capital letters as FORTH.

The FORTH-79 and FORTH-83 implementations, which were not written by Moore, became de facto standards, and an official technical standard of the language was published in 1994 as ANS Forth.

A wide range of Forth derivatives existed before and after ANS Forth.

The free and open-source software Gforth implementation is actively maintained, as are several commercially supported systems.

Forth typically combines a compiler with an integrated command shell, where the user interacts via subroutines called words.

Words can be defined, tested, redefined, and debugged without recompiling or restarting the whole program. All syntactic elements, including variables, operators, and control flow, are defined as words. A stack is used to pass parameters between words, leading to a Reverse Polish notation style. (Full article...) -

Image 15

JavaScript (JS) is a programming language and core technology of the web platform, alongside HTML and CSS. Ninety-nine percent of websites on the World Wide Web use JavaScript on the client side for webpage behavior.

Web browsers have a dedicated JavaScript engine that executes the client code. These engines are also utilized in some servers and a variety of apps. The most popular runtime system for non-browser usage is Node.js.

JavaScript is a high-level, often just-in-time–compiled language that conforms to the ECMAScript standard. It has dynamic typing, prototype-based object-orientation, and first-class functions. It is multi-paradigm, supporting event-driven, functional, and imperative programming styles. It has application programming interfaces (APIs) for working with text, dates, regular expressions, standard data structures, and the Document Object Model (DOM). (Full article...)

Selected images

-

Image 1Partial map of the Internet based on the January 15, 2005 data found on opte.org. Each line is drawn between two nodes, representing two IP addresses. The length of the lines are indicative of the delay between those two nodes. This graph represents less than 30% of the Class C networks reachable by the data collection program in early 2005.

-

Image 2Margaret Hamilton standing next to the navigation software that she and her MIT team produced for the Apollo Project.

-

Image 3An IBM Port-A-Punch punched card

-

Image 6A head crash on a modern hard disk drive

-

Image 7A lone house. An image made using Blender 3D.

-

Image 8Ada Lovelace was an English mathematician and writer, chiefly known for her work on Charles Babbage's proposed mechanical general-purpose computer, the Analytical Engine. She was the first to recognize that the machine had applications beyond pure calculation, and to have published the first algorithm intended to be carried out by such a machine. As a result, she is often regarded as the first computer programmer.

-

Image 10Partial view of the Mandelbrot set. Step 1 of a zoom sequence: Gap between the "head" and the "body" also called the "seahorse valley".

-

Image 11Grace Hopper at the UNIVAC keyboard, c. 1960. Grace Brewster Murray: American mathematician and rear admiral in the U.S. Navy who was a pioneer in developing computer technology, helping to devise UNIVAC I. the first commercial electronic computer, and naval applications for COBOL (common-business-oriented language).

-

Image 12Output from a (linearised) shallow water equation model of water in a bathtub. The water experiences 5 splashes which generate surface gravity waves that propagate away from the splash locations and reflect off of the bathtub walls.

-

Image 13Stephen Wolfram is a British-American computer scientist, physicist, and businessman. He is known for his work in computer science, mathematics, and in theoretical physics.

-

Image 14This image (when viewed in full size, 1000 pixels wide) contains 1 million pixels, each of a different color.

-

Image 15A view of the GNU nano Text editor version 6.0

-

Image 17GNOME Shell, GNOME Clocks, Evince, gThumb and GNOME Files at version 3.30, in a dark theme

-

Image 18Deep Blue was a chess-playing expert system run on a unique purpose-built IBM supercomputer. It was the first computer to win a game, and the first to win a match, against a reigning world champion under regular time controls. Photo taken at the Computer History Museum.

Did you know? - load more entries

- ... that Cornell University's student-oriented programming language dialect was made available to other universities but required a "research grant" payment in exchange?

- ... that both Thackeray and Longfellow bought paintings by Fanny Steers?

- ... that the Gale–Shapley algorithm was used to assign medical students to residencies long before its publication by Gale and Shapley?

- ... that a pink skin for Mercy in the video game Overwatch helped raise more than $12 million for breast cancer research?

- ... that a "hacker" with blog posts written by ChatGPT was at the center of an online scavenger hunt promoting Avenged Sevenfold's album Life Is but a Dream...?

- ... that the 2024 psychological horror game Mouthwashing utilises non-diegetic scene transitions that mimic glitches and crashes?

Subcategories

WikiProjects

- There are many users interested in computer programming, join them.

Computer programming news

No recent news

Topics

Related portals

Associated Wikimedia

The following Wikimedia Foundation sister projects provide more on this subject:

-

Commons

Free media repository -

Wikibooks

Free textbooks and manuals -

Wikidata

Free knowledge base -

Wikinews

Free-content news -

Wikiquote

Collection of quotations -

Wikisource

Free-content library -

Wikiversity

Free learning tools -

Wiktionary

Dictionary and thesaurus

135555555555555

644564

![Image 1 C# (/ˌsiː ˈʃɑːrp/ see SHARP) is a general-purpose high-level programming language supporting multiple paradigms. C# encompasses static typing, strong typing, lexically scoped, imperative, declarative, functional, generic, object-oriented (class-based), and component-oriented programming disciplines. The principal inventors of the C# programming language were Anders Hejlsberg, Scott Wiltamuth, and Peter Golde from Microsoft. It was first widely distributed in July 2000 and was later approved as an international standard by Ecma (ECMA-334) in 2002 and ISO/IEC (ISO/IEC 23270 and 20619) in 2003. Microsoft introduced C# along with .NET Framework and Microsoft Visual Studio, both of which are technically speaking, closed-source. At the time, Microsoft had no open-source products. Four years later, in 2004, a free and open-source project called Microsoft Mono began, providing a cross-platform compiler and runtime environment for the C# programming language. A decade later, Microsoft released Visual Studio Code (code editor), Roslyn (compiler), and the unified .NET platform (software framework), all of which support C# and are free, open-source, and cross-platform. Mono also joined Microsoft but was not merged into .NET. As of January 2025,[update] the most recent stable version of the language is C# 13.0, which was released in 2024 in .NET 9.0 (Full article...)](http://upload.wikimedia.org/wikipedia/en/d/d2/Blank.png)